Posted by Dave Kleidermacher, Eugene Liderman, and Android and Made by Google security teams

We believe that security and transparency are paramount pillars for electronic products connected to the Internet. Over the past year, we’ve been excited to see more focused activity across policymakers, industry partners, developers, and public interest advocates around raising the security and transparency bar for IoT products.

That said, the details of IoT product labeling (the definition of labeling, what labeling needs to convey in terms of security and privacy, where the label should reside, and how to achieve consumer acceptance) are still open for debate. Google has also been considering these core questions for a long time. As a provider of an operating system and IoT products, and the maintainer of multiple large ecosystems, we see firsthand how critical these details will be to the future of the IoT. In an effort to be a catalyst for collaboration and transparency, today we’re sharing our proposed list of principles around IoT security labeling.

Setting the Stage: Defining IoT Labeling

IoT labeling is a complex and nuanced topic, so as an industry, we should first align on a set of labeling definitions that could help reduce potential fragmentation and offer a harmonized approach that could drive a desired outcome:

- Label: printed and/or digital representation of a digital product’s security and/or privacy status intended to inform consumers and/or other stakeholders. A label may include both printed and digital representations; for example, a printed label may include a logo and QR code that references a digital representation of the security claims being made.

- Labeling scheme: a program that defines, manages, and monitors the use of labels, including but not limited to user experience, adherence to specific standards or security profiles, and lifecycle management of the label (e.g. decommissioning).

- Evaluation scheme: a program that publishes, manages, and monitors the security claims of digital products against security requirements and related standards; labeling schemes may rely on evaluation schemes to produce the information referred to in or by their labels.

Proposed Principles for IoT Security Labeling Schemes

We believe in five core principles for IoT labeling schemes. These principles will help increase transparency against the full baseline of security criteria for IoT. These principles will also increase competition in security and push manufacturers to offer products with effective security protections, increase transparency, and help generate higher levels of assurance of protection over time.

1. A printed label must not imply trust

Unlike food labels, digital security labels must be “live” labels, where security/privacy status is conveyed on a centrally maintained website, which ideally would be the same site hosting the evaluation scheme. A physical label, either printed on a box or visible in an app, can be used if and only if it encourages users to visit the website (e.g. scan a QR code or click a link) to obtain the real-time status.

At any point in time, a digital product may become unsafe for use. For example, if a critical, in-the-wild, remote exploit of a product is discovered and cannot be mitigated (e.g. via a patch), then it may be necessary to change that product’s status from safe to unsafe.

Printed labels that convey trust implicitly, such as “certified to NNN standard” or “3 stars”, run the danger of influencing consumers to make harmful decisions. A consumer may purchase a webcam with a “3-star” security label only to find, when they return home, that the product has non-mitigatable vulnerabilities that make it unsafe. Or a product may sit on a shelf long enough to become non-compliant or unsafe. Labeling programs should help consumers make better security decisions. A printed “trust me” label will, in some circumstances, mislead consumers.
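To make the “live” label idea concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `LiveRegistry` class, the status vocabulary, the record fields, and the product ID are hypothetical and do not describe any real evaluation scheme's data format. The point it shows is that the printed label carries only a pointer, while the status is resolved at lookup time and can change after the box has been printed.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Status(Enum):
    # Hypothetical status vocabulary, for illustration only.
    CONFORMANT = "conformant"
    UNSAFE = "unsafe"


@dataclass
class LabelRecord:
    product_id: str
    status: Status
    last_reviewed: date
    note: str = ""


class LiveRegistry:
    """Stands in for the centrally maintained website behind the QR code."""

    def __init__(self):
        self._records = {}

    def publish(self, record):
        self._records[record.product_id] = record

    def mark_unsafe(self, product_id, reviewed, note):
        # Status changes happen here, never on the printed label.
        record = self._records[product_id]
        record.status = Status.UNSAFE
        record.last_reviewed = reviewed
        record.note = note

    def lookup(self, product_id):
        return self._records[product_id]


def printed_label(product_id):
    # The printed text makes no trust claim; it only points at the live record.
    return f"Scan for current security status: product/{product_id}"


registry = LiveRegistry()
registry.publish(LabelRecord("webcam-123", Status.CONFORMANT, date(2022, 1, 10)))
box_text = printed_label("webcam-123")  # fixed at manufacturing time

# Later, an unmitigated remote exploit is found while the box sits on a shelf.
registry.mark_unsafe("webcam-123", date(2022, 9, 1), "unpatched remote exploit")

print(box_text)
print(registry.lookup("webcam-123").status.value)
```

In this sketch the text on the box never changes, yet a consumer who scans it after the status change sees the product marked unsafe. A printed “3 stars” claim has no equivalent way to be withdrawn.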

2. Labels must reference strong international evaluation schemes

The challenge of utilizing a labeling scheme is not the physical manifestation of the label but rather ensuring that the label references a security/privacy status/posture that is maintained by a trustworthy security/privacy evaluation scheme, such as the ones being developed by the Connectivity Standards Alliance (CSA) and GSMA. Both of these organizations are actively developing IoT security/privacy evaluation schemes that reference well-regarded standards, including recent IoT baseline security guidance from NIST, ETSI, ISO, and OWASP. Some important requirements for evaluation schemes leveraged by a national labeling program include:

Strong governance: The NGO must have strong governance. For example, NGOs that house both a scheme and their own in-house evaluation lab introduce potential conflicts of interest that should be avoided.

Strong track record for managing evaluation schemes at scale: Managing a high quality, global scheme is hard. National authorities have struggled with this for many years, especially in the consumer realm. An NGO that has no prior track record of managing a scheme with significant global adoption is unlikely to be sufficiently trustworthy for a national labeling scheme to reference. CSA and GSMA have long track records of managing global schemes that have stood the test of time.

Choice with a high quality bar: The world needs a small set of high quality evaluation schemes that can act as the hub within a hub-and-spoke model for enabling national labeling schemes across the globe. Evaluation schemes will authorize a range of labs for lab-tested results, providing price competition for lab engagements. We need more than one scheme to encourage competition among evaluation schemes, as they too will levy fees for membership, certification, and monitoring. However, balance is key, as too many schemes could be challenging for governments to monitor and trust. Setting a high bar for governance and track record, as described above, will help curate global evaluation scheme choices.

International participation: National labeling schemes must recognize that many manufacturers sell products across the world. A national label that does not reference NGOs that serve the global community will force multiple inconsistent national labeling schemes that are prohibitively expensive for small and medium size product developers. Misaligned or non-harmonized national efforts may become a significant barrier to entry for smaller vendors and run counter to the intended goals of competition-enhancing policies in their respective markets.

Assurance maintenance: The NGO evaluation scheme must provide a mechanism for independent researchers to pressure test conformance claims made by manufacturers. Moment-in-time certifications have historically plagued security evaluation schemes, and for cost reasons, forced annual re-certifications are not the answer either. For the vast majority of consumer products, we should rely on crowdsourced research to identify weaknesses that may question a certification result. This approach has succeeded in helping to maintain the security of numerous global products and platforms and is especially needed to help monitor the results of self-attestation certifications that will be needed in any national scale labeling program. This is also an area where federal funding may be most needed; security bounty programs will add even more incentive for the security community to pressure test evaluation scheme results and hold the entire labeling program supply chain accountable. These reward programs are also a great way to recruit more people into the cybersecurity field.

3. A minimum security baseline must be coupled with flexibility above it

A minimum security baseline must be coupled with flexibility to define additional requirements and/or levels to accelerate ecosystem improvements. Security labeling is nascent, and most schemes are focused on common sense baseline requirement standards. These standards will set an important minimum bar for digital security, reducing the likelihood that consumers will be exposed to truly poor security practices. However, we should never say things like, “we need a labeling scheme to ensure that digital products are secure.” Security is not a binary state. Applying a minimum set of best practices will not magically make a product free of vulnerabilities. But it will discourage the most common security foibles. Furthermore, it is folly to expect that baseline security standards will protect against advanced persistent threat actors. Rather, they’ll hopefully provide broad protection against common opportunistic attackers. The Mirai botnet attack was so successful because so many digital products lack the most rudimentary security functionality: the ability to apply a security update in the field.

Over time we need to do better. Security evaluation schemes need to be sufficiently flexible to allow for additional security functional requirements to be measured and rated across products. For example, the current baseline security requirements do not cover things like the strength of a biometric authenticator (important for phones and a growing range of consumer digital products) nor do they provide a standardized method for comparing the relative strength of security update policies (e.g. a product that receives regular updates for five years should be valued more highly by consumers than one that receives updates for two years). Communities that focus on specific vertical markets of product families are motivated to create security functional requirement profiles (and labels) that go above and beyond the baseline and are more tailored for that product category. Labeling schemes must allow for this flexibility, as long as profile compliance is managed by high quality evaluation schemes.

Similarly, in addition to functionality such as biometrics and update frequency, labels need to allow for assurance levels, which answer the question, “how much confidence should we have in this product’s security functionality claims?” For example, emerging consumer evaluation schemes may permit a self-attestation of conformance or a lab test that validates basic security functionality. These kinds of attestations yield relatively low assurance, but still better than none. Today’s schemes do not allow for an assessment that emulates a high potential attacker trying to break the system’s security functionality. To date, due to cost and complexity, high potential attacker vulnerability assessments have been limited to a vanishingly small number of products, including secure elements and small hypervisors. Yet for a nation’s most critical systems, such as connected medical devices, cars, and applications that manage sensitive data for millions of consumers, a higher level of assurance will be needed, and any labeling scheme must not preclude future extensions that offer higher levels of assurance.

4. Broad-based transparency is just as important as the minimum bar

While it is desirable that labeling schemes provide consumers with simple guidance on safety, the desire for such a simple bar forces it to be the lowest common denominator for security capability so as not to preclude large portions of the market. It is equally important that labeling schemes increase transparency in security. So much of the discussion around labeling schemes has focused on selecting the best possible minimum bar rather than promoting transparency of security capability, regardless of what minimum bar a product may meet. This is short-sighted and fails to learn from many other consumer rating schemes (e.g. Consumer Reports) that have successfully provided transparency around a much wider range of product capabilities over time.

Again, while a common baseline is a good place to start, we must also encourage the use of more comprehensive requirement specifications developed by high-quality NGO standards bodies and/or schemes against which products can be assessed. The goal of this method is not to mandate every requirement above the baseline, but rather to mandate transparency of compliance against those requirements. Similar to many other consumer rating schemes, the transparency across a wide range of important capabilities (e.g. the biometrics example above) will enable easy side-by-side comparison during purchasing decisions, which will act as the tide to raise all boats, driving product developers to compete with each other in security. This already happens with speeds and feeds, battery life, energy consumption, and many other features that people care about. For example, the requirement for transparency could classify the strength of the biometric based on spoof / presentation attack detection rate, which we measure for Android. If we develop more comprehensive transparency in our labeling scheme, consumers will learn and care about a wider range of security capabilities that today remain below the veil; that awareness will drive demand for product developers to do better.
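As a rough illustration of what side-by-side transparency could look like, the Python sketch below renders a comparison from per-product disclosures. The `Disclosure` fields, the product names, and the numbers are all hypothetical and are not taken from any existing scheme or measurement. The sketch assumes that every product meets the same baseline and that the remaining fields are optional disclosures above it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Disclosure:
    product: str
    meets_baseline: bool
    update_support_years: Optional[int]    # None means not disclosed
    spoof_acceptance_pct: Optional[float]  # None means not disclosed


def show(value, unit=""):
    # A missing disclosure is displayed as such, not hidden.
    return "not disclosed" if value is None else f"{value}{unit}"


def side_by_side(disclosures):
    rows = [("product", "baseline", "updates", "biometric spoof acceptance")]
    for d in disclosures:
        rows.append((
            d.product,
            "yes" if d.meets_baseline else "no",
            show(d.update_support_years, " yr"),
            show(d.spoof_acceptance_pct, "%"),
        ))
    # Pad each column to its widest cell so the rows line up.
    widths = [max(len(row[i]) for row in rows) for i in range(len(rows[0]))]
    lines = []
    for row in rows:
        cells = [cell.ljust(width) for cell, width in zip(row, widths)]
        lines.append("  ".join(cells).rstrip())
    return "\n".join(lines)


catalog = [
    Disclosure("Lock A", True, 5, 7.0),   # made-up values
    Disclosure("Lock B", True, 2, None),  # made-up values
]
table = side_by_side(catalog)
print(table)
```

Both hypothetical products pass the same minimum bar, so a pass/fail label alone could not tell them apart. The extra columns expose the difference in update support and make the gap in the second product's disclosure visible.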

5. Labeling schemes are useless without adoption incentive

Transparency is the core concept that can raise demand and improve supply of better security across the IoT. However, what will cause products to be evaluated so that security capability data will be published and made easily consumable? After thirty years of the world wide web and connected digital technology, it is clear that simply expecting product developers to “do the right thing” for security is insufficient.

“Voluntary” regimes will attract the same developers that are already doing good security work and depend on doing so for their customers and brands. Security is, on average, poor across the IoT market because product developers optimize for profitability, and the economic impact of poor security is usually not sufficiently high to move the needle. Many avenues can lead to increased economic incentives for improved security. That means a mix of carrots and sticks will be necessary to incentivize developers to increase the security of their products.

National labeling schemes should focus on a few of the biggest market movers, in order of decreasing impact:

National mandate: Some national governments are moving towards legislation or executive orders that will require common baseline security requirements to be met, with corresponding labeling to differentiate compliant products from those not covered by the mandate. National mandates can drive improved behavior at scale. However, mandating a poor labeling scheme can do more harm than good. For example, if every nation creates a bespoke evaluation scheme, small and medium size developers would be priced out of the market due to the need to recertify and label their products across all these schemes. Not only will non-harmonized approaches harm industry financially, they will also inhibit innovation as developers create less inclusive products to avoid nations with painful labeling regimes.

National mandates and labeling schemes must reference broadly applicable, high quality NGO standards and schemes (as described above) so that they can be reused across multiple national labeling schemes. Global normalization and cross-recognition are not a nice-to-have; national schemes will fail if they do not solve for this important economic reality upfront. Ideally, government officials who care about a successful national labeling scheme should be involved to nurture and guide the NGO schemes that are trying to solve this problem globally.

Retailers: Retailers of digital products could have a huge impact by preferencing baseline standards compliance for digital products. In its most impactful form, the retailer would mandate compliance for all products listed for sale. The larger the retailer, the more impact is possible. Less broad, but still extremely impactful, would be providing visual labeling and/or search and discovery preferences for products that meet the requirements specified in high quality security evaluation schemes.

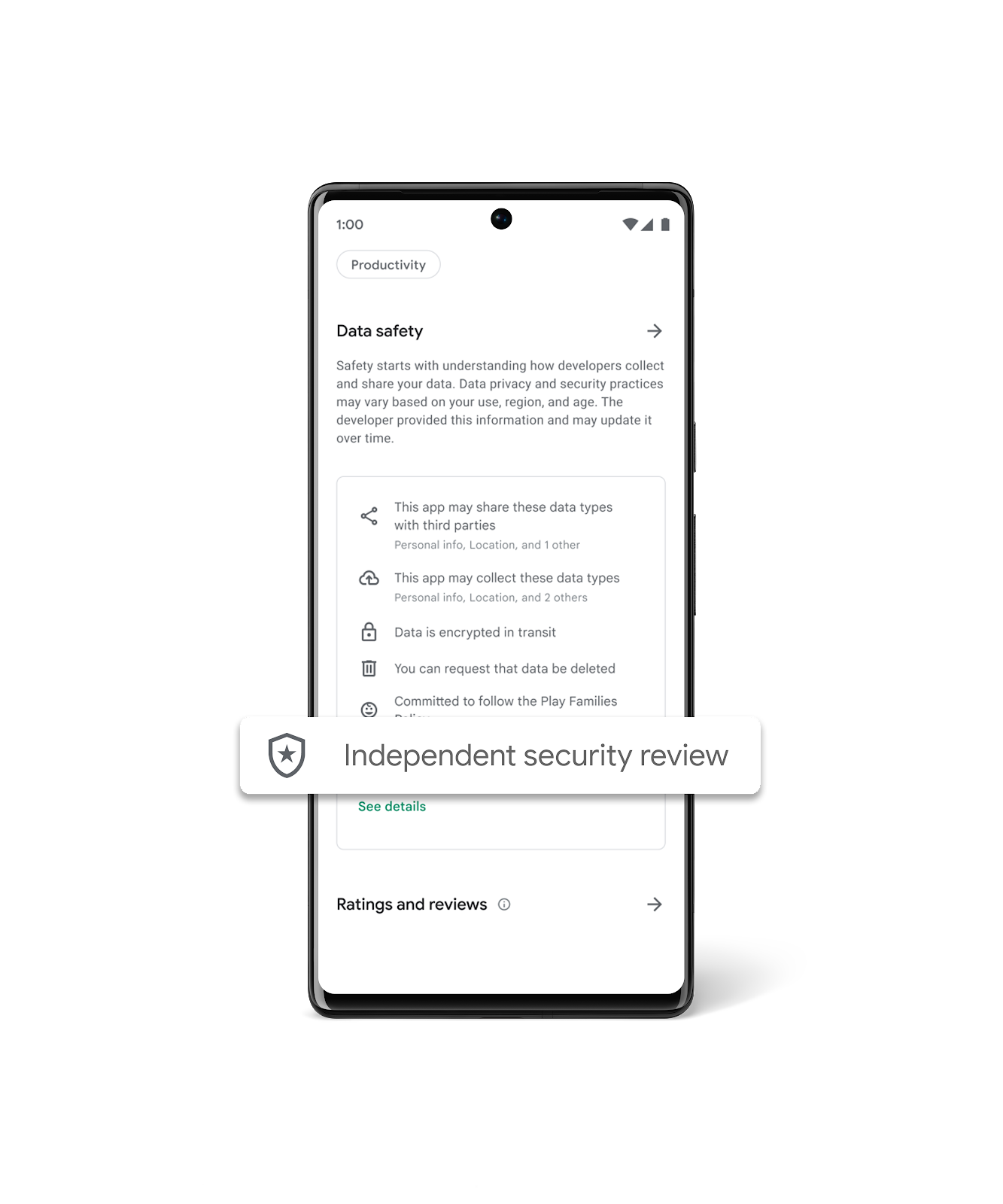

Platform developers: Many digital products exist as part of platforms, such as devices built on the Android Open Source Project (AOSP) platform or apps published on the Google Play app store platform. In addition, interoperability standards such as Matter and Bluetooth act as platforms, certifying products that meet those interoperability standards. All of these platform developers may use security compliance within larger certification, compliance, and business incentive programs that can drive adoption at scale. The impact depends on the size and scale of the platform and whether the carrots provided by platform providers are sufficiently attractive.

Continuing to Strive For Collaboration, Standardization, and Transparency

Our goal is to increase transparency against the full baseline of security criteria for the IoT over time. This will help drive “competition” in security and push manufacturers to offer products with more robust security protections. But we don’t want to stop at just increasing transparency. We will also strive to build realistic higher levels of assurance. As labeling efforts gain steam, we are hopeful that public sector and industry can work together to drive global harmonization to prevent fragmentation, and we hope to provide our expertise and act as a valued partner to governments as they develop policies to help their countries stay ahead of the latest threats in IoT. We look forward to our continued partnership with governments and industry to reduce complexity and increase innovation while improving global cybersecurity.

---------------------------------------------------------------------------------------------

See also: Google testimony on security labeling and evaluation schemes in UK Parliament

See also: Google participated in a White House strategic discussion on IoT Security Labeling